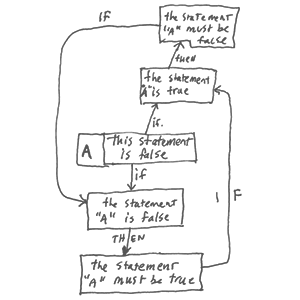

Gödel’s [ger-del] incompleteness theorems is the name given to two theorems, proved by Kurt Gödel in 1931. They are about limitations in all but the most trivial formal systems for arithmetic of mathematical interest. The theorems are very important for the philosophy of mathematics.

The idea behind the theorems is that some mathematical systems are not complete. Most people think they show that any attempt to find a complete and consistent set of axioms for all of mathematics (e.g. Hilbert’s program) is impossible.

read more »

Gödel’s Incompleteness Theorems

Teleology

Teleology [tel-ee-ol-uh-jee] is the philosophical idea that things have goals or purposes. It is the ‘view that developments are due to the purpose or design which is served by them.’ The word ‘teleological’ comes from the Ancient Greek ‘telos,’ which means ‘end’ or ‘purpose.’ An example is Aristotle’s view of nature, later adopted by the Catholic Church. A simpler example is a tool such as a clock, which people design to tell the time. One of the most important questions is whether an agent (human or divine) is needed for things to have a purpose.

All known cultures have creation stories in their religions. However, much of science operates on the principle that the natural world is self-organizing. This applies particularly to astronomy and biology, whose phenomena were once explained as the action of a deity and are now seen as natural and self-organizing. Cybernetics is the basic science of self-organizing systems. Whether teleology in its original sense applies to the natural world remains a matter of controversy between religion and science.

Molecular Gastronomy

Molecular gastronomy [ga-stron-uh-mee] is a subdiscipline of food science that seeks to investigate, explain, and make practical use of the physical and chemical transformations of ingredients that occur during cooking, as well as the social, artistic, and technical components of culinary and gastronomic phenomena in general. The term also describes a modern style of cooking, practiced by both scientists and food professionals in many professional kitchens and laboratories, that takes advantage of technical innovations from the scientific disciplines.

The term ‘molecular gastronomy’ was coined in 1992 by the late Oxford physicist Nicholas Kurti and Hervé This, a chemist at the French INRA (a public research institute dedicated to agriculture). Some chefs associated with the term reject it, preferring alternatives such as ‘culinary physics’ and ‘experimental cuisine.’ There are many branches of food science, each studying different aspects of food such as safety, microbiology, preservation, chemistry, engineering, and physics. Until the advent of molecular gastronomy, no formal scientific discipline was dedicated to studying the processes of everyday cooking as done at home or in a restaurant.

Modernist Cuisine

‘Modernist Cuisine: The Art and Science of Cooking’ is a 2011 cookbook by Nathan Myhrvold, Chris Young, and Maxime Bilet. The book is both an encyclopedia and a guide to the science of contemporary cooking. Five volumes cover history and fundamentals; techniques and equipment; animals and plants; ingredients and preparation; and plated-dish recipes. The sixth volume is a kitchen manual.

Myhrvold attended Ecole de Cuisine la Varenne, a cooking school in Burgundy, France, and has cooked part-time at Rover’s, a French restaurant in Seattle owned by Thierry Rautureau. He is also a scientist: he earned advanced degrees in geophysics, space physics, and theoretical and mathematical physics, did postdoctoral research with Stephen Hawking at Cambridge University, and worked for many years as chief technology officer and chief strategist of Microsoft.

Mesolimbic Pathway

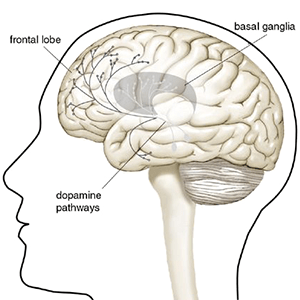

The mesolimbic pathway, sometimes referred to as the reward pathway, is a dopaminergic pathway in the brain. The pathway connects the ventral tegmental area, which is located in the midbrain, to the nucleus accumbens and olfactory tubercle, which are located in the ventral striatum.

The release of dopamine from the mesolimbic pathway into the nucleus accumbens regulates incentive salience (i.e., motivation and desire) for rewarding stimuli and facilitates reinforcement and reward-related motor function learning; it may also play a role in the subjective perception of pleasure. Dysregulation of the mesolimbic pathway and its output neurons in the nucleus accumbens plays a significant role in the development and maintenance of addiction.

Sensitization

Sensitization [sen-si-tuh-zey-shuhn] is an example of non-associative learning (learning that involves repeated exposure to a single type of stimulus rather than the pairing of stimuli) in which the progressive amplification of a response follows repeated administrations of a stimulus. An everyday example is constantly rubbing an arm: what begins as a warm sensation eventually turns into pain. The pain is the result of the progressively amplified response of the nerve endings.

Sensitization is thought to underlie both adaptive and maladaptive learning processes in the organism. At the cellular level, it refers to the process by which a receptor becomes more likely to respond to a stimulus. There are several different types of sensitization: long-term potentiation, kindling, central sensitization, and drug sensitization.

Habituation

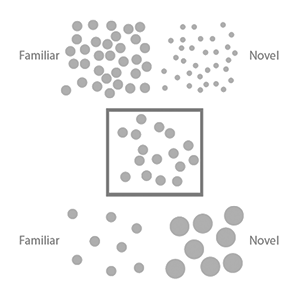

Habituation [huh-bich-oo-ey-shuhn] occurs when an animal responds less to repeated stimuli. It is a primitive kind of learning and a basic process of biological systems. Animals do not need conscious motivation or awareness for habituation to occur; it enables them to distinguish meaningful information from background stimuli. Habituation occurs in all animals, as well as in some large protozoans. The decrease in responding is specific to the habituated stimulus.

For example, someone habituated to the taste of lemon would respond significantly more when presented with the taste of lime (stimulus discrimination). Two factors that influence habituation are the interval between stimuli and the duration of each presentation. Shorter intervals and longer durations increase habituation; longer intervals and shorter durations reduce it.

Naïve Physics

Naïve physics or folk physics is the untrained human perception of basic physical phenomena. In artificial intelligence, the study of naïve physics is part of the effort to formalize the commonsense knowledge of human beings. Many ideas of folk physics are simplifications, misunderstandings, or misperceptions of well-understood phenomena; some cannot give useful predictions of detailed experiments, and others are simply contradicted by more thorough observation.

Folk-physics ideas may sometimes be true, be true only in certain limited cases, be true as a good first approximation to a more complex effect, or predict the right effect while misunderstanding the underlying mechanism. Naïve physics can also be defined as the intuitive understanding that all humans have of objects in the physical world. Cognitive psychologists are studying these phenomena in greater depth, with promising results. Psychological studies indicate that certain notions of the physical world are innate in all of us.

Moralistic Fallacy

The moralistic fallacy is in essence the reverse of the naturalistic fallacy (the assumption that what is natural is good). It is the informal fallacy of assuming that what is desirable is found or inherent in nature: it presumes that what ought to be (something deemed preferable) corresponds with what is, or what naturally occurs. What should be moral is assumed a priori to also be naturally occurring.

Cognitive scientist Steven Pinker writes that ‘The naturalistic fallacy is the idea that what is found in nature is good. It was the basis for Social Darwinism, the belief that helping the poor and sick would get in the way of evolution, which depends on the survival of the fittest. Today, biologists denounce the Naturalistic Fallacy because they want to describe the natural world honestly, without people deriving morals about how we ought to behave — as in: If birds and beasts engage in adultery, infanticide, cannibalism, it must be OK.’

Naturalistic Fallacy

The phrase ‘naturalistic fallacy’ refers to the claim that what is natural is inherently good or right, and that what is unnatural is bad or wrong (‘appeal to nature’). It is the converse of the ‘moralistic fallacy,’ the notion that what is good or right is natural and inherent. The naturalistic fallacy is related to (and sometimes confused with) Hume’s ‘is–ought problem,’ which examines the difference between descriptive statements (about what is) and prescriptive or normative statements (about what ought to be).

A different, and earlier, usage of ‘naturalistic fallacy’ was introduced by British philosopher G. E. Moore in his 1903 book ‘Principia Ethica.’ Moore stated that a naturalistic fallacy is committed whenever a philosopher attempts to prove a claim about ethics by appealing to a definition of the term ‘good’ in terms of one or more natural properties (such as ‘pleasant,’ ‘more evolved,’ ‘desired,’ etc.).

Chemophobia

Chemophobia literally means ‘fear of chemicals.’ It is most often used to describe the assumption that ‘chemicals’ (i.e., man-made products or artificially concentrated but naturally occurring chemicals) are bad and harmful, while ‘natural’ things (i.e., chemical compounds that occur naturally or that are obtained using traditional techniques) are good and healthy.

General chemophobia derives from incomplete knowledge or misunderstanding of science, and is a form of technophobia and fear of the unknown. In terms of chemical safety, ‘industrial,’ ‘synthetic,’ ‘artificial,’ and ‘man-made’ do not necessarily mean harmful, and ‘natural’ does not necessarily mean better.

Fine-tuned Universe

The fine-tuned Universe is the proposition that the conditions that allow life in the Universe can only occur when certain universal fundamental physical constants lie within a very narrow range, so that if any of several fundamental constants were only slightly different, the Universe would be unlikely to be conducive to the establishment and development of matter, astronomical structures, elemental diversity, or life as it is presently understood.

The existence and extent of fine-tuning in the Universe is a matter of dispute in the scientific community.
