The Shockwave Rider is a 1975 science fiction novel by John Brunner, notable for its hero’s use of computer hacking skills to escape pursuit in a dystopian future, and for the coining of the word ‘worm’ to describe a program that propagates itself through a computer network. It also introduces the concept of a ‘Delphi pool’ (a large group of people used as a statistical sampling resource), perhaps derived from the RAND Corporation’s Delphi method. In the novel the pool works as a futures market on world events, and it closely resembles DARPA’s controversial and cancelled Policy Analysis Market (dubbed the ‘Terrorism Market’ by the media).

The title derives from the futurist work ‘Future Shock’ by Alvin Toffler. The hero is a survivor in a hypothetical world of quickly changing identities, fashions, and lifestyles, where individuals are still controlled and oppressed by a powerful and secretive state apparatus. His highly developed computer skills enable him to use any public telephone to punch in a new identity, thus reinventing himself. As a fugitive, he must do this from time to time in order to escape capture. The title is also a metaphor for survival in an uncertain world.

The Shockwave Rider

Sidewise in Time

‘Sidewise in Time’ is a science fiction short story by Murray Leinster that was first published in a 1934 issue of ‘Astounding Stories.’ In the story, Professor Minott is a mathematician at Robinson College in Virginia who has determined that an apocalyptic cataclysm is fast approaching that could destroy the entire universe. The cataclysm manifests itself on June 5, 1935 (one year in the future in terms of the story’s original publication) when sections of the Earth’s surface begin changing places with their counterparts in alternate timelines.

A Roman legion from a timeline where the Roman Empire never fell appears on the outskirts of St. Louis, Missouri. Viking longships from a timeline where the Vikings settled North America raid a seaport in Massachusetts. A traveling salesman from Louisville, Kentucky finds himself in trouble with the law when he travels into an area where the South won the American Civil War. A ferry approaching San Francisco finds the flag of Czarist Russia flying from a grim fortress dominating the city.

A Logic Named Joe

‘A Logic Named Joe’ is a science fiction short story by Murray Leinster that was first published in a 1946 issue of ‘Astounding Science Fiction.’ The story actually appeared under Leinster’s real name, Will F. Jenkins, since the issue also included another story, ‘Adapter,’ under the Leinster pseudonym.

The story is particularly noteworthy as a prediction of massively networked personal computers and their drawbacks, written at a time when computing was in its infancy. The story’s narrator is a ‘logic’ (much like a personal computer) repairman nicknamed Ducky. In the story, a logic that he names ‘Joe’ develops some degree of sapience and ambition.

Three Laws of Robotics

The Three Laws of Robotics are a set of rules devised by the science fiction author Isaac Asimov. The rules were introduced in his 1942 short story ‘Runaround,’ although they had been foreshadowed in a few earlier stories.

The Three Laws are: ‘A robot may not injure a human being or, through inaction, allow a human being to come to harm’; ‘A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law’; and ‘A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.’ These form an organizing principle and unifying theme for Asimov’s robot-based fiction, appearing in his ‘Robot’ series, the stories linked to it, and his ‘Lucky Starr’ series of young-adult fiction.

Roboethics

The term roboethics was coined in 2002 by roboticist Gianmarco Veruggio, who also chaired an Atelier (workshop) funded by the European Robotics Research Network to outline areas where research may be needed. The resulting road map effectively divided the ethics of artificial intelligence into two sub-fields to accommodate researchers’ differing interests:

Machine ethics is concerned with the behavior of artificial moral agents (AMAs), while roboethics is concerned with the behavior of humans: how they design, construct, use and treat robots and other artificially intelligent beings.

Machine Ethics

Machine Ethics is the part of the ethics of artificial intelligence concerned with the moral behavior of Artificial Moral Agents (AMAs) (e.g. robots and other artificially intelligent beings). It contrasts with roboethics, which is concerned with the moral behavior of humans as they design, construct, use and treat such beings.

In 2009, academics and technical experts attended a conference to discuss the potential impact of robots and computers and the hypothetical possibility that they could become self-sufficient and able to make their own decisions. They discussed the extent to which computers and robots might acquire autonomy, and to what degree they could use such abilities to pose a threat or hazard.

AI Ethics

The ethics of artificial intelligence is the part of the ethics of technology specific to robots and other artificially intelligent beings. It is typically divided into Roboethics, a concern with the moral behavior of humans as they design, construct, use and treat artificially intelligent beings, and Machine Ethics, a concern with the moral behavior of artificial moral agents (AMAs).

The term ‘roboethics’ was coined by roboticist Gianmarco Veruggio in 2002. It considers both how artificially intelligent beings may be used to harm humans and how they may be used to benefit humans. ‘Robot rights’ are the moral obligations of society towards its machines, similar to human rights or animal rights. These may include the right to life and liberty, freedom of thought and expression, and equality before the law.

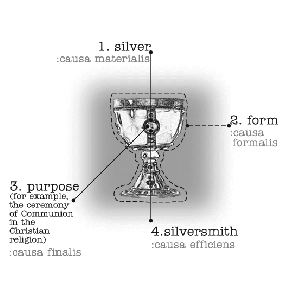

The Question Concerning Technology

For the German philosopher Martin Heidegger, the question of being formed the essence of his philosophical inquiry as a whole.

In ‘The Question Concerning Technology’ (‘Die Frage nach der Technik’), Heidegger sustains this inquiry, but turns to the particular phenomenon of technology, seeking to derive the essence of technology and humanity’s role of being with it. Heidegger originally published the text in 1954, in ‘Vorträge und Aufsätze’ (‘Lectures and Essays’).

Gestell

Gestell [gesh-tell] is a German word used by philosopher Martin Heidegger to describe what lies behind or beneath modern technology. This concept was applied to Heidegger’s exposition of the essence of technology.

Heidegger’s conclusion was that technology is fundamentally enframing. As such, the essence of technology is Gestell. Indeed, ‘Gestell, literally “framing,” is an all-encompassing view of technology, not as a means to an end, but rather a mode of human existence.’

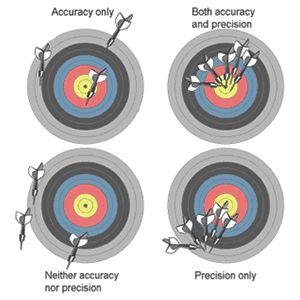

Accuracy and Precision

The accuracy of a measurement system is the degree of closeness of measurements of a quantity to that quantity’s actual value. The precision of a measurement system, also called reproducibility or repeatability, is the degree to which repeated measurements under unchanged conditions show the same results. Although the two words reproducibility and repeatability can be synonymous in colloquial use, they are deliberately contrasted in the context of the scientific method.

A measurement system can be accurate but not precise, precise but not accurate, neither, or both. For example, if an experiment contains a systematic error, then increasing the sample size generally increases precision but does not improve accuracy. The result would be a consistent yet inaccurate string of results from the flawed experiment. Eliminating the systematic error improves accuracy but does not change precision.
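As a rough illustration of the point about systematic error, the Python sketch below simulates an instrument whose readings carry a fixed bias plus random noise. The true value, bias, noise level, and function names are all assumptions invented for the example. It uses the standard error of the mean as a stand-in for the precision of the averaged result, which is one reasonable reading of the paragraph above rather than the only one.

```python
import random
import statistics

def simulate_measurements(true_value, bias, noise_sd, n, seed=0):
    """Return n simulated readings: true value + fixed bias + Gaussian noise."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]

def accuracy_error(measurements, true_value):
    """How far the mean reading lies from the true value (smaller = more accurate)."""
    return abs(statistics.fmean(measurements) - true_value)

def standard_error(measurements):
    """Spread of the mean estimate (smaller = more precise average)."""
    return statistics.stdev(measurements) / len(measurements) ** 0.5

# Hypothetical numbers chosen only for illustration.
TRUE_VALUE = 100.0
BIAS = 2.0       # systematic error
NOISE_SD = 1.0   # random error

small = simulate_measurements(TRUE_VALUE, BIAS, NOISE_SD, n=20)
large = simulate_measurements(TRUE_VALUE, BIAS, NOISE_SD, n=20000)
unbiased = simulate_measurements(TRUE_VALUE, 0.0, NOISE_SD, n=20000)

for label, data in [("biased, n=20", small),
                    ("biased, n=20000", large),
                    ("unbiased, n=20000", unbiased)]:
    print(f"{label:>18}: error of mean = {accuracy_error(data, TRUE_VALUE):.3f}, "
          f"standard error = {standard_error(data):.4f}")
```

With more samples the standard error shrinks, but the error of the mean stays near the bias. Removing the bias brings the mean close to the true value while leaving the scatter of individual readings essentially unchanged.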

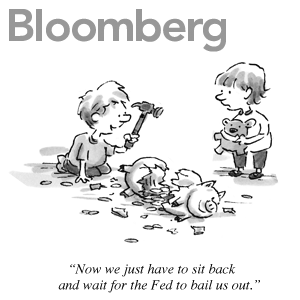

Moral Hazard

In economic theory, a moral hazard is a situation where the costs that could be incurred as a result of a decision will not be felt by the party taking the risk. Knowing that the potential costs or burdens of taking such a risk will be borne, in whole or in part, by others creates a moral hazard and invites high-risk behavior.

For example, with respect to the originators of subprime loans, many may have suspected that the borrowers would not be able to maintain payments and that, for this reason, the loans were not, in the long run, going to be worth much. Still, because there were many buyers of these loans (or of pools of these loans) willing to take on that risk, the originators did not concern themselves with the potential long-term consequences of making these loans.
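The incentive at work in a moral hazard can be made concrete with a small expected-value calculation. The sketch below is purely illustrative: the probabilities, payoffs, and the `loss_share` parameter are made-up assumptions, not figures from any real market. It compares the expected payoff of the same risky bet when the decision-maker bears the whole loss and when most of the loss falls on others.

```python
def expected_payoff(p_win, gain, loss, loss_share=1.0):
    """Expected payoff to the party taking the risk.

    loss_share is the fraction of any loss that this party actually bears;
    the remainder is assumed to fall on someone else.
    """
    return p_win * gain - (1.0 - p_win) * loss * loss_share

# Hypothetical bet: 50% chance to gain 100, 50% chance to lose 150.
P_WIN, GAIN, LOSS = 0.5, 100.0, 150.0

bears_all = expected_payoff(P_WIN, GAIN, LOSS)                     # full exposure
bears_fifth = expected_payoff(P_WIN, GAIN, LOSS, loss_share=0.2)   # 80% of loss shifted

print(f"bearing the full loss:    {bears_all:+.1f}")
print(f"bearing 20% of the loss:  {bears_fifth:+.1f}")
```

Under these assumed numbers the bet has a negative expected payoff for a party that bears its own losses, but a positive one once most of the loss is shifted elsewhere, which is why the shifted party may take a risk it would otherwise decline.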

Too Big to Fail

‘Too big to fail’ describes financial institutions that are so large and so interconnected that their failure is widely held to be disastrous to the economy, and which therefore must be supported by government when they face difficulty. The term was popularized by Congressman Stewart McKinney in a 1984 hearing discussing the FDIC’s intervention with a failing bank, Continental Illinois.

Proponents of this theory believe that the importance of some institutions means they should become recipients of beneficial financial and economic policies from governments or central banks. One of the problems that arises is moral hazard (where costs that could be incurred will not be felt by the party taking the risk). In this case, companies insulated by protective policies will seek to profit from them and take high-risk, high-return positions, since they are able to leverage these risks based on the policy preference they receive.