Positive illusions are unrealistically favorable attitudes that people have towards themselves. They are a form of self-deception or self-enhancement that feels good, maintains self-esteem, or staves off discomfort, at least in the short term.

There are three broad kinds: inflated assessment of one’s own abilities (illusory superiority), unrealistic optimism about the future (optimism bias), and an illusion of control. The term ‘positive illusions’ originates in a 1988 paper. There are controversies about the extent to which people reliably demonstrate positive illusions, and also about whether these illusions are beneficial to the people who have them.

Positive Illusions

Self-deception

Self-deception is a process of denying or rationalizing away the relevance, significance, or importance of opposing evidence and logical argument. Self-deception involves convincing oneself of a truth (or lack of truth) so that one does not reveal any self-knowledge of the deception. Simple instances of self-deception include common occurrences such as the alcoholic who is self-deceived in believing that his drinking is under control, the husband who is self-deceived in believing that his wife is not having an affair, and the jealous colleague who is self-deceived in believing that her colleague’s greater professional success is due to ruthless ambition.

A consensus on the identification of self-deception remains elusive to contemporary philosophers, the result of the term’s paradoxical elements and ambiguous paradigmatic cases. Self-deception also incorporates numerous dimensions, such as epistemology, psychological and intellectual processes, social contexts, and morality. As a result, the term is highly debated and occasionally argued to be an impossible phenomenon.

Motivated Tactician

The term ‘motivated tactician’ is used in social psychology to describe a person who shifts from quick-and-dirty, cognitively economical tactics to more thoughtful, thorough strategies when processing information, depending on the type and degree of their motivation. This idea has been used to explain why people use stereotyping, biases, and categorization in some situations and more analytical thinking in others. Because of its empirical support and robustness, the concept is now a preferred theory of human social perception.

After much research on categorization and other cognitive shortcuts, psychologists began to describe human beings as cognitive misers (i.e. they use a lot of mental shortcuts). On this view, a need to conserve mental resources, rather than motivations and urges, causes people to take shortcuts when thinking about stimuli.

Cognitive Miser

Cognitive miser is a term which refers to the idea that only a small amount of information is actively perceived by individuals when making decisions, and many cognitive shortcuts (such as drawing on prior information and knowledge) are used instead to attend to relevant information and arrive at a decision. The term was coined in 1984 by Susan T. Fiske and Shelley E. Taylor in an early book on social cognition (thinking related to interpersonal relationships). In the area of psychology, perception is one of the base fields. It is defined as how one views the world, but is not necessarily an accurate interpretation of it.

The term ‘cognitive miser’ therefore reflects the fact that people cannot possibly assimilate all the information with which the world bombards them. The mind either takes relevant information into the conscious mind or takes information that may be relevant into the subconscious mind. The information taken into the subconscious will later undergo an internal screening. Anything useful will be reinforced with ties to other areas where it is of use; anything not of use will typically be forgotten.

Motivation

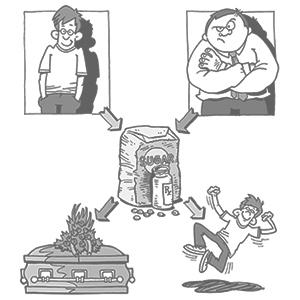

Motivation is the psychological feature that arouses an organism to action toward a desired goal and elicits, controls, and sustains certain goal-directed behaviors. For instance, an individual who has not eaten feels hungry and, in response, eats and so diminishes the feeling of hunger. There are many approaches to motivation: physiological, behavioral, cognitive, and social. It is the crucial element in setting and attaining goals, and research shows that subjects can influence their own levels of motivation and self-control.

A 2007 paper, ‘Where the Motivation Resides and Self-Deception Hides: How Motivated Cognition Accomplishes Self-Deception,’ examined how people remain blind to the motives underlying their flattering self-construals, attitudes, and social judgments. Motivated cognition accomplishes the goal of self-deception. Self-serving conclusions are produced and the influence of such distortions remains hidden from conscious awareness because of the ubiquitous presence and specialized nature of motivated cognition.

Eugenics

Eugenics [yoo-jen-iks] is the study of hereditary improvement of the human race by controlled selective breeding. Eugenics rests on some basic ideas. The first is that what is true of animals is true of man. The characteristics of animals are passed on from one generation to the next in heredity, including mental characteristics. For example, the behavior and mental characteristics of different breeds of dog differ, and all modern breeds are greatly changed from wolves. The breeding and genetics of farm animals show that if the parents of the next generation are chosen, then that affects what offspring are born.

Negative eugenics aims to cut out traits that lead to suffering, by limiting people with the traits from reproducing. Positive eugenics aims to produce more healthy and intelligent humans, by persuading people with those traits to have more children. In the past, many ways were proposed for doing this, and even today eugenics means different things to different people. The idea of eugenics is controversial, because in the past it was sometimes used to justify discrimination and injustice against people who were thought to be genetically unhealthy or inferior.

Tabula Rasa

Tabula rasa [tab-yuh-luh rah-suh] (Latin: ‘blank slate’) is the theory that individuals are born without built-in mental content and that their knowledge comes from experience and perception.

The theory was discussed by Aristotle, but popularized by John Locke (the father of liberalism) in the 17th century: ‘Let us then suppose the mind to be, as we say, white paper void of all characters, without any ideas. How comes it to be furnished? … To this I answer, in one word, from EXPERIENCE.’ Locke thought all knowledge came from sense data (smells, sights, sounds, pain, etc.), and that the mind is empty at birth. Locke’s idea was immediately picked up by others.

Psychological Nativism

In the field of psychology, nativism is the view that certain skills or abilities are ‘native’ or hardwired into the brain at birth. This is in contrast to empiricism, the ‘blank slate’ or tabula rasa view, which states that the brain has inborn capabilities for learning from the environment but does not contain content such as innate beliefs. Some nativists believe that specific beliefs or preferences are hardwired. For example, one might argue that some moral intuitions are innate or that color preferences are innate.

A less established argument is that nature supplies the human mind with specialized learning devices. This latter view differs from empiricism only to the extent that the algorithms that translate experience into information may be more complex and specialized in nativist theories than in empiricist theories. However, empiricists largely remain open to the nature of learning algorithms and are by no means restricted to the historical associationist mechanisms of behaviorism (which argued that the content of consciousness can be explained by the association and reassociation of irreducible sensory and perceptual elements).

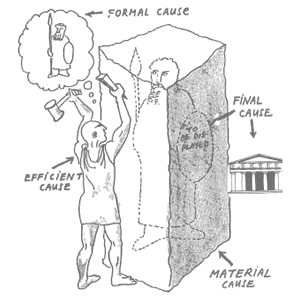

Causality

Causality is a way to describe how different events relate to one another. Suppose there are two events A and B. If B happens because A happened, then people say that A is the cause of B, or that B is the effect of A.

Aristotle looked at the problem of causality in his books ‘Posterior Analytics’ and ‘Metaphysics’. He wrote: ‘All causes are beginnings; we have scientific knowledge when we know the cause; to know a thing’s nature is to know the reason why it is.’ This can be used to explain causality.

Kurdaitcha

Kurdaitcha [ka-dai-tcha] (or kurdaitcha man) is a ritual ‘executioner’ in Australian Aboriginal culture. The ‘execution’ in this case is a complex ritual similar to voodoo hexes or pagan curses. The kurdaitcha ritual is a nocebo, a negative response to physically harmless stimuli.

Voodoo death, also known as psychosomatic death, is a term coined by Harvard physiologist Walter Cannon in 1942 to describe the phenomenon of sudden death as brought about by a strong emotional shock, such as fear. The word ‘kurdaitcha’ is also used by Europeans to refer to the shoes worn by the Kurdaitcha, woven of feathers and human hair and treated with blood.

Voodoo Death

Voodoo death, a term coined by Harvard physiologist Walter Cannon in 1942 and also known as psychogenic or psychosomatic death, is the phenomenon of sudden death brought about by a strong emotional shock, such as fear. The anomaly is recognized as psychosomatic in that death is caused by an emotional response, often fear, to some suggested outside force.

Voodoo death is particularly noted in native societies and in concentration or prisoner-of-war camps, but the condition is not specific to any culture or mentality.

Nocebo

In medicine, a nocebo [no-see-bo] reaction or response refers to harmful, unpleasant, or undesirable effects a subject manifests after receiving an inert dummy drug or placebo. Nocebo responses are not chemically generated and are due only to the subject’s pessimistic belief and expectation that the inert drug will produce negative consequences. In these cases, there is no ‘real’ drug involved, but the actual negative consequences of the administration of the inert drug, which may be physiological, behavioral, emotional, and/or cognitive, are nonetheless real.

An example of the nocebo effect would be someone who dies of fright after being bitten by a non-venomous snake. The term ‘nocebo’ (Latin: ‘I will harm’) was chosen by Walter Kennedy, in 1961, to denote the counterpart of one of the more recent applications of the term placebo (Latin: ‘I will please’); namely, that of a placebo being a drug that produced a beneficial, healthy, pleasant, or desirable consequence in a subject, as a direct result of that subject’s beliefs and expectations. The term ‘nocebo’ can also refer to positive outcomes based upon the patient’s expectation of that outcome.