And Now for Something Completely Different is a 1971 film spin-off from the television comedy series ‘Monty Python’s Flying Circus.’ The title was used as a catchphrase in the television show. The film consists of 90 minutes of the best sketches from the first two series of the show. The sketches were remade on film without an audience and were intended for an American audience that had not yet seen the series. The announcer (John Cleese) uses the phrase ‘and now for something completely different’ several times during the film, in situations such as being roasted on a spit and lying on top of a desk in a small, pink bikini.

This was the Pythons’ first feature film, made up of sketches re-shot on an extremely low budget (and often slightly edited) for cinema release. Famous sketches included are the ‘Dead Parrot’ sketch, ‘The Lumberjack Song,’ ‘Upperclass Twits,’ ‘Hell’s Grannies,’ and the ‘Nudge Nudge’ sketch. Financed by Playboy’s UK executive Victor Lownes, it was intended as a way of breaking Monty Python in America, and although it was ultimately unsuccessful in this, the film did good business in the UK. The group did not consider the film a success, but it enjoys a cult following today.

Menger Sponge

In mathematics, the Menger [meng-er] sponge is a fractal curve. It is a universal curve, in that it has topological dimension one, and any other curve (more precisely: any compact metric space of topological dimension 1) is homeomorphic to some subset of it.

It is sometimes called the Menger-Sierpinski sponge or the Sierpinski sponge. It is a three-dimensional extension of the Cantor set and Sierpinski carpet. It was first described by Karl Menger (1926) while exploring the concept of topological dimension. The Menger sponge simultaneously exhibits an infinite surface area and encloses zero volume.
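
The infinite-area, zero-volume claim can be checked numerically. The sketch below assumes the standard construction, which this excerpt does not spell out: start from a unit cube, cut it into 27 subcubes, remove the 7 that sit at the center of each face and at the center of the cube, and repeat on the 20 that remain. The function name `menger_stats` is illustrative, and the closed form used for the surface area is the one usually quoted for a unit starting cube.

```python
from fractions import Fraction


def menger_stats(level: int):
    """Return (cube_count, volume, surface_area) after `level` iterations.

    Assumes a unit starting cube and the standard keep-20-of-27 rule.
    """
    cube_count = 20 ** level
    # Each iteration keeps 20 of the 27 subcubes, so the volume shrinks by 20/27.
    volume = Fraction(20, 27) ** level
    # Commonly quoted closed form for the surface area of the level-n sponge.
    surface_area = 2 * Fraction(20, 9) ** level + 4 * Fraction(8, 9) ** level
    return cube_count, volume, surface_area


for level in range(6):
    cubes, volume, area = menger_stats(level)
    print(f"level {level}: cubes={cubes}, volume={float(volume):.4f}, area={float(area):.2f}")
```

The volume factor 20/27 is below 1, so the volume tends to zero, while the 20/9 term in the area grows without bound.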

Methuselah Foundation

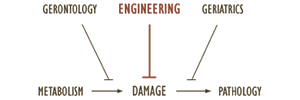

The Methuselah [muh-thoo-zuh-luh] Foundation studies methods of extending lifespan. It is a non-profit volunteer organization, co-founded by Aubrey de Grey and David Gobel, based in Virginia. Activities of the foundation include ‘My Bridge 4 Life,’ a community tool designed to help people deal with the different diseases of aging; the Mprize, a monetary prize given to anyone who efficiently rejuvenates and/or extends the healthy lifespan of mice; and various collaborative projects under the umbrella concept of MLife Sciences. The foundation takes its name from the Biblical character whose name is commonly used to refer to any living organism reaching great age.

In 2003, de Grey and Gobel co-founded the Mprize (known then as the ‘Methuselah Mouse Prize’), a prize designed to accelerate research into effective life extension interventions by awarding monetary prizes to researchers who extend the healthy lifespan of mice to unprecedented lengths. Regarding this, de Grey stated in 2005, ‘if we are to bring about real regenerative therapies that will benefit not just future generations, but those of us who are alive today, we must encourage scientists to work on the problem of aging.’ The prize is currently $4 million. The foundation believes that if the reversal of aging can be demonstrated in mice, an enormous amount of funding would be made available for similar research in humans, potentially through a massive government project similar to the Human Genome Project or through private for-profit companies.

Superintelligence

A superintelligence is a hypothetical entity which possesses intelligence surpassing that of any existing human being. Superintelligence may also refer to the specific form or degree of intelligence possessed by such an entity. The highest ranges of intelligence are evaluative. The possibility of superhuman intelligence is frequently discussed in the context of artificial intelligence. Increasing natural intelligence through genetic engineering or brain-computer interfacing is a common motif in futurology and science fiction.

Collective intelligence is often regarded as a pathway to superintelligence or as an existing realization of the phenomenon. Superintelligence is defined as an ‘intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.’ The definition does not specify the means by which superintelligence could be achieved: whether biological, technological, or some combination. Neither does it specify whether or not superintelligence requires self-consciousness or experience-driven perception.

Omega Point

Omega Point is a term coined by the French Jesuit Pierre Teilhard de Chardin (1881–1955) to describe a maximum level of complexity and consciousness towards which he believed the universe was evolving.

In this theory, developed by Teilhard in ‘The Future of Man’ (1950), the universe is constantly developing towards higher levels of material complexity and consciousness, a theory of evolution that Teilhard called the Law of Complexity/Consciousness. For Teilhard, the universe can only move in the direction of more complexity and consciousness if it is being drawn by a supreme point of complexity and consciousness.

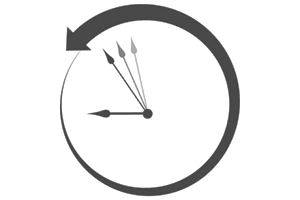

Accelerating Change

In futures studies and the history of technology, accelerating change is a perceived increase in the rate of technological (and sometimes social and cultural) progress throughout history, which may suggest faster and more profound change in the future. While many have suggested accelerating change, the popularity of this theory in modern times is closely associated with various advocates of the technological singularity (the emergence of greater-than-human intelligence through technological means), such as Vernor Vinge and Ray Kurzweil.

In 1938, Buckminster Fuller introduced the word ephemeralization to describe the trends of ‘doing more with less’ in chemistry, health and other areas of industrial development. In 1946, Fuller published a chart of the discoveries of the chemical elements over time to highlight the development of accelerating acceleration in human knowledge acquisition. In 1958, Stanisław Ulam wrote in reference to a conversation with John von Neumann: ‘One conversation centered on the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.’

Second Half of the Chessboard

The rice and chessboard problem is a mathematical problem: If a chessboard were to have rice placed upon each square such that one grain were placed on the first square, two on the second, four on the third, and so on (doubling the number of grains on each subsequent square), how many grains of rice would be on the chessboard at the finish? The answer is 18,446,744,073,709,551,615, which would be a heap of rice larger than Mount Everest.

This problem (or a variation of it) demonstrates the quick growth of exponential sequences. In technology strategy, ‘the second half of the chessboard’ is a phrase, coined by Ray Kurzweil, in reference to the point where an exponentially growing factor begins to have a significant economic impact on an organization’s overall business strategy. While the number of grains on the first half of the chessboard is large, the amount on the second half is vastly larger. The first square of the second half alone contains more grains than the entire first half.
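
The figures above are easy to verify with exact integer arithmetic. The following is a minimal sketch; the helper name `grains_on` is illustrative and squares are numbered 1 to 64.

```python
SQUARES = 64


def grains_on(square: int) -> int:
    """Grains on a given square (1-based): 1, 2, 4, ... doubling each time."""
    return 2 ** (square - 1)


total = sum(grains_on(s) for s in range(1, SQUARES + 1))
first_half = sum(grains_on(s) for s in range(1, SQUARES // 2 + 1))
second_half = total - first_half

print(f"total grains:         {total:,}")
print(f"first half (1-32):    {first_half:,}")
print(f"square 33 alone:      {grains_on(33):,}")
print(f"second half (33-64):  {second_half:,}")
```

The total equals 2^64 − 1, and square 33 holds 2^32 grains, exactly one more than the 2^32 − 1 grains on the whole first half.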

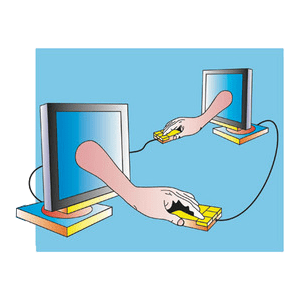

Metcalfe’s Law

Metcalfe’s law states that the value of a telecommunications network is proportional to the square of the number of connected users of the system. First formulated in this form by George Gilder in 1993, and attributed to Robert Metcalfe in regard to Ethernet, Metcalfe’s law was originally presented, circa 1980, not in terms of users, but rather of ‘compatible communicating devices’ such as telephones.

Only more recently, with the launch of the internet, did this law carry over to users and networks, in line with its original intent. The law has often been illustrated using the example of fax machines: a single fax machine is useless, but the value of every fax machine increases with the total number of machines in the network, because the total number of people with whom each user may send and receive documents increases. Likewise, in social networks, the greater the number of users of the service, the more valuable the service becomes to the community.
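
The fax machine example can be made concrete by counting possible pairwise connections, which is one common way to motivate the square in the law. The sketch below is illustrative only: the function names and the proportionality constant `k` are assumptions, since the law states a proportionality and not an absolute value.

```python
def pairwise_links(n: int) -> int:
    """Number of distinct pairs among n devices: n * (n - 1) / 2."""
    return n * (n - 1) // 2


def metcalfe_value(n: int, k: float = 1.0) -> float:
    """Network value under Metcalfe's law, with a hypothetical constant k."""
    return k * n ** 2


for n in (1, 2, 10, 100, 1000):
    print(f"{n:>5} devices: {pairwise_links(n):>7} possible links, value ~ {metcalfe_value(n):,.0f}")
```

A single machine has no one to connect to, and doubling the number of devices roughly quadruples both the link count and the modeled value.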

Reversible Computing

Reversible computing is a model of computing where the computational process to some extent is reversible, i.e., time-invertible. There are two major, closely related types of reversibility that are of particular interest: physical reversibility and logical reversibility. A process is said to be physically reversible if it results in no increase in physical entropy; it is isentropic.

Circuits designed to approach physical reversibility are also referred to as charge recovery logic or adiabatic computing. Although in practice no nonstationary physical process can be exactly physically reversible or isentropic, there is no known limit to the closeness with which we can approach perfect reversibility. The motivation for the study of technologies aimed at actually implementing reversible computing is that they offer what is predicted to be the only potential way to improve the energy efficiency of computers beyond the fundamental von Neumann-Landauer limit.
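
For a sense of scale, the von Neumann-Landauer limit is usually stated as a minimum of kT ln 2 of energy dissipated per irreversible bit operation, where k is the Boltzmann constant and T the temperature. That formula is not given in the text above, and the 300 K room temperature used below is an assumption chosen for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy per irreversible bit operation: k * T * ln 2."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


room_temperature = 300.0  # kelvin, assumed for illustration
energy = landauer_limit_joules(room_temperature)
print(f"Landauer limit at {room_temperature:.0f} K: {energy:.3e} J per bit")
```

At 300 K this comes to roughly 2.9 × 10⁻²¹ joules per bit, a bound that a perfectly reversible process would not be subject to.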

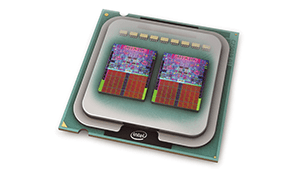

Multi-core Processor

A multi-core CPU is a single computing component with two or more independent actual processors (called ‘cores’), which are the units that read and execute program instructions. Multiple cores can run multiple instructions at the same time, increasing overall speed for programs amenable to parallel computing.

Processors were originally developed with only one core. After a certain point, multi-processor techniques are no longer efficient, largely because of issues with congestion in supplying instructions and data to the many processors. The threshold is roughly in the range of several tens of cores; above this threshold network on chip technology is advantageous. Tilera processors feature a switch in each core to route data through an on-chip mesh network to lessen the data congestion, enabling their core count to scale up to 100 cores.

Embarrassingly Parallel

In parallel computing, an embarrassingly parallel workload is one for which little or no effort is required to separate the problem into a number of parallel tasks. This is often the case where there exists no dependency (or communication) between those parallel tasks.

Such workloads are easy to run on server farms which do not have any of the special infrastructure used in a true supercomputer cluster. They are thus well suited to large, internet-based distributed platforms such as BOINC. A common example of an embarrassingly parallel problem arises in graphics processing units (GPUs) for tasks such as 3D projection, where each pixel on the screen may be rendered independently.
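
The per-pixel independence described above can be sketched with Python's standard `concurrent.futures` module. Everything here is illustrative: `shade_pixel` is a made-up stand-in for real rendering work, and a thread pool is used only to keep the example short and portable (in CPython, swapping in `ProcessPoolExecutor` would be the usual way to get real CPU parallelism for work like this).

```python
from concurrent.futures import ThreadPoolExecutor


def shade_pixel(coords):
    """Compute one pixel value from its coordinates alone (hypothetical shader)."""
    x, y = coords
    return (x * 31 + y * 17) % 256


def render(width, height, max_workers=4):
    """Render every pixel as an independent task and reassemble the rows."""
    coords = [(x, y) for y in range(height) for x in range(width)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # No task reads another task's result, so they can run in any order;
        # map() still returns the results in input order.
        values = list(pool.map(shade_pixel, coords))
    return [values[row * width:(row + 1) * width] for row in range(height)]


image = render(width=4, height=3)
for row in image:
    print(row)
```

Because no pixel depends on any other, the result is the same for any worker count, and the only coordination needed is collecting the outputs.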

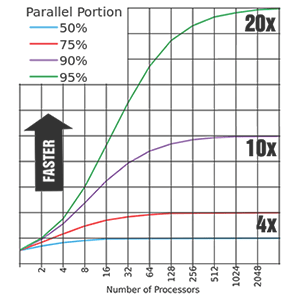

Amdahl’s Law

Amdahl’s law, named after computer architect Gene Amdahl, is used to find the maximum expected improvement to an overall system when only part of the system is improved. It is often used in parallel computing to predict the theoretical maximum speedup using multiple processors. The speedup of a program using multiple processors in parallel computing is limited by the time needed for the sequential fraction of the program. For example, if a program needs 20 hours using a single processor core, and a particular portion of 1 hour cannot be parallelized, while the remaining 19 hours (95%) can be parallelized, then regardless of how many processors we devote to a parallelized execution of this program, the minimum execution time cannot be less than that critical 1 hour. Hence the speedup is limited to at most 20x.

Amdahl’s law is often conflated with the law of diminishing returns (the tendency for a continuing application of effort or skill toward a particular project or goal to decline in effectiveness after a certain level of result has been achieved). Amdahl’s law does represent the law of diminishing returns if you are considering what sort of return you get by adding more processors to a machine, if you are running a fixed-size computation that will use all available processors to their capacity. Each new processor you add to the system will add less usable power than the previous one. Each time you double the number of processors the speedup ratio will diminish, as the total throughput heads toward the limit. This analysis neglects other potential bottlenecks such as memory bandwidth and I/O bandwidth, if they do not scale with the number of processors; however, taking into account such bottlenecks would tend to further demonstrate the diminishing returns of only adding processors.
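
The 20-hour example and the diminishing returns from doubling can be reproduced with the usual statement of the law, speedup = 1 / ((1 − p) + p / n), where p is the parallelizable fraction and n the number of processors. The formula is not written out above, and the function name below is illustrative.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Theoretical speedup for a fixed-size job under Amdahl's law."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


PARALLEL_FRACTION = 0.95  # 19 of the 20 hours can be parallelized

previous = None
processors = 1
while processors <= 4096:
    speedup = amdahl_speedup(PARALLEL_FRACTION, processors)
    hours = 20 / speedup
    ratio = "" if previous is None else f", x{speedup / previous:.3f} vs. previous"
    print(f"{processors:>5} processors: speedup {speedup:6.2f}, {hours:5.2f} hours{ratio}")
    previous = speedup
    processors *= 2
```

Each doubling multiplies the speedup by a smaller factor than the one before, and the running time approaches but never drops below the 1 serial hour, which caps the speedup at 20x.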