Gesture recognition is a topic in computer science and language technology whose goal is to interpret human gestures with mathematical algorithms. Gestures can originate from any bodily motion or state, but they most commonly come from the face or hands. Current areas of focus include emotion recognition from facial expressions and hand gesture recognition. Many approaches use cameras and computer vision algorithms to interpret sign language.

Beyond the face and hands, gesture recognition techniques also address the identification and recognition of posture, gait, proxemics (culture-specific personal boundaries), and other human behaviors. Gesture recognition can be seen as a way for computers to begin to understand human body language, offering richer interaction between humans and machines than a mouse and keyboard allow.

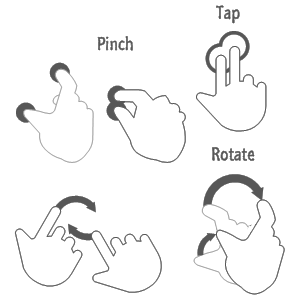

In computer interfaces, two types of gestures are distinguished: online gestures, which can be regarded as direct manipulations such as scaling and rotating, and offline gestures, which are processed after the interaction is finished (e.g., drawing a circle to activate a context menu). Pointing has a very specific purpose in most cultures: to reference an object or location by its position relative to the person pointing. Using gesture recognition to determine where a person is pointing helps identify the context of statements or instructions, an application of particular interest in robotics. Controlling a computer through facial gestures is a useful application of gesture recognition for users who may be physically unable to use a mouse or keyboard. Eye tracking in particular can be used to control cursor motion or to focus on elements of a display.
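The circle-to-context-menu example shows what makes a gesture "offline": nothing is decided until the whole stroke is available. Below is a minimal Python sketch of such a check, assuming the stroke arrives as a list of (x, y) samples once the user lifts the pen or releases the button. The function name `is_circle` and both thresholds are hypothetical choices for illustration, not taken from any particular toolkit.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def is_circle(stroke: List[Point],
              max_radius_spread: float = 0.2,
              max_gap_ratio: float = 0.3) -> bool:
    """Return True if a completed stroke roughly traces a closed circle.

    Runs only after the stroke is finished (an offline gesture) and checks:
    1. every point lies at about the same distance from the centroid, and
    2. the end of the stroke comes back near its start.
    Both thresholds are illustrative assumptions, not tuned values.
    """
    if len(stroke) < 8:
        return False  # too few samples to judge the shape

    # The centroid of the sampled points approximates the circle's centre.
    cx = sum(x for x, _ in stroke) / len(stroke)
    cy = sum(y for _, y in stroke) / len(stroke)

    radii = [math.hypot(x - cx, y - cy) for x, y in stroke]
    mean_r = sum(radii) / len(radii)
    if mean_r == 0:
        return False

    # Relative spread of the radii: close to 0 for a true circle.
    std_r = math.sqrt(sum((r - mean_r) ** 2 for r in radii) / len(radii))
    if std_r / mean_r > max_radius_spread:
        return False

    # Closure: gap between first and last points, relative to the radius.
    gap = math.dist(stroke[0], stroke[-1])
    return gap / mean_r <= max_gap_ratio


# Usage: a sampled unit circle passes, a straight line does not.
circle = [(math.cos(2 * math.pi * k / 32), math.sin(2 * math.pi * k / 32))
          for k in range(33)]
line = [(float(i), 0.0) for i in range(20)]
print(is_circle(circle))  # True
print(is_circle(line))    # False
```

A real recognizer would typically compare the stroke against several templates rather than hard-coding one shape, but the key property is the same: the decision is made on the finished stroke, not while it is being drawn.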

Wired gloves can provide input to the computer about the position and rotation of the hands using magnetic or inertial tracking devices. Some gloves can also detect finger bending with a high degree of accuracy (5-10 degrees) or even provide haptic feedback, which simulates the sense of touch. Depth-aware cameras, such as structured-light or time-of-flight cameras, can generate a depth map at short range and use this data to approximate a 3D representation of what is being seen. Stereo cameras use two cameras whose positions relative to one another are known, so a 3D representation can be approximated from their combined output. A normal camera can also be used for gesture recognition where the resources or environment would not suit other forms of image-based recognition. Single cameras were once thought to be less effective than stereo or depth-aware cameras, but Flutter, a start-up based in Palo Alto, challenged this view: it released an app that could be downloaded to any Windows or Mac computer with a built-in webcam, making gesture recognition accessible to a wider audience.
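Knowing how the two stereo cameras relate to one another is what lets depth be recovered: for a rectified pair, a point seen at horizontal pixel positions u_left and u_right has disparity d = u_left - u_right and depth Z = f·B/d, where f is the focal length in pixels and B is the baseline between the cameras. The Python sketch below assumes an already rectified pair and a pixel correspondence found by some matching step that is not shown. The class and function names, and the camera numbers in the usage lines, are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class StereoRig:
    """Parameters of a rectified stereo pair (illustrative assumptions)."""
    focal_px: float    # focal length in pixels, shared by both cameras
    baseline_m: float  # distance between the two camera centres, metres
    cx: float          # principal point x, pixels
    cy: float          # principal point y, pixels


def triangulate(rig: StereoRig, u_left: float, u_right: float,
                v: float) -> Optional[Tuple[float, float, float]]:
    """Recover a 3D point (metres, left-camera frame) from one matched pixel.

    Assumes rectified images, so the match lies on the same row v.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        return None  # invalid match, or point effectively at infinity
    z = rig.focal_px * rig.baseline_m / disparity  # Z = f * B / d
    # Back-project through the pinhole model to get X and Y.
    x = (u_left - rig.cx) * z / rig.focal_px
    y = (v - rig.cy) * z / rig.focal_px
    return (x, y, z)


# Usage with made-up numbers: f = 700 px, B = 6 cm, 42 px disparity -> Z = 1 m.
rig = StereoRig(focal_px=700.0, baseline_m=0.06, cx=320.0, cy=240.0)
print(triangulate(rig, u_left=400.0, u_right=358.0, v=240.0))
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant points than for nearby ones, which is one reason stereo and other depth-sensing setups tend to work best at short range.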

With controller-based gestures, the controller acts as an extension of the body, so that when gestures are performed, some of their motion can be conveniently captured by software. Mouse gestures are one such example, where the motion of the mouse is correlated to a symbol drawn by a person’s hand; the Wii Remote is another, as it can track changes in acceleration over time to represent gestures. Devices such as the LG Electronics Magic Wand, the Loop and the Scoop use Hillcrest Labs’ Freespace technology, which uses MEMS accelerometers, gyroscopes and other sensors to translate gestures into cursor movement. The software also compensates for human tremor and inadvertent movement.
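One simple way to correlate mouse motion with a drawn symbol is to reduce the pointer path to a short sequence of stroke directions and look that sequence up in a table. The Python sketch below assumes that approach; it is not how any product named above works. The function name `encode_directions`, the `GESTURES` table and the 10-pixel dead zone are hypothetical, and the dead zone is only a crude stand-in for the tremor compensation described in the paragraph.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical gesture table mapping direction strings to commands.
GESTURES: Dict[str, str] = {"L": "back", "R": "forward", "DR": "close_tab"}


def encode_directions(path: List[Point], min_step: float = 10.0) -> str:
    """Turn a mouse path into a direction string such as "RD" (right, then down).

    Screen coordinates are assumed, so y grows downward. Movements shorter
    than `min_step` pixels are accumulated instead of emitted, a crude dead
    zone against small jitter.
    """
    if not path:
        return ""
    symbols: List[str] = []
    ax, ay = path[0]  # anchor: the point where the last move was emitted
    for x, y in path[1:]:
        dx, dy = x - ax, y - ay
        if max(abs(dx), abs(dy)) < min_step:
            continue  # still inside the dead zone; keep accumulating
        # Quantize the movement to the dominant axis.
        if abs(dx) >= abs(dy):
            sym = "R" if dx > 0 else "L"
        else:
            sym = "D" if dy > 0 else "U"
        if not symbols or symbols[-1] != sym:
            symbols.append(sym)  # collapse repeated directions
        ax, ay = x, y
    return "".join(symbols)


# Usage: a down-then-right stroke maps to the hypothetical "close_tab".
stroke = [(0.0, 0.0), (0.0, 30.0), (30.0, 30.0)]
print(GESTURES.get(encode_directions(stroke), "unrecognized"))  # close_tab
```

Encoding only the dominant direction makes the recognizer tolerant of sloppy drawing, at the cost of distinguishing fewer shapes than a template- or acceleration-based approach like the one the Wii Remote example suggests.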

The Daily Omnivore

Everything is Interesting