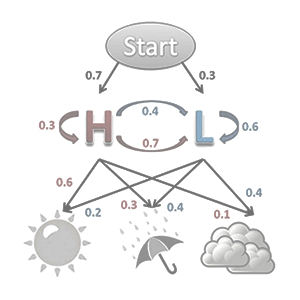

In mathematics, a Markov [mahr-kahv] chain, named after the Russian mathematician Andrey Markov (1856–1922), is a random process with the Markov property (the memoryless property of a stochastic [random] process) that moves among a discrete (finite or countable) set of states. ‘Discrete’ here means two things: the system can be in one of a finite or countable number of states, and it changes randomly in discrete steps. It can be helpful to think of the system as evolving through discrete steps in time, although strictly speaking the ‘step’ may have nothing to do with time.
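To make the idea concrete, here is a minimal Python sketch of a Markov chain simulation. The two weather states and their probabilities (`TRANSITIONS`) are hypothetical and chosen only for illustration. The point is that `step` picks the next state using nothing but the current state's row of transition probabilities.

```python
import random

# Hypothetical two-state chain: the states and probabilities are
# illustrative assumptions, not taken from any real data.
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}


def step(state, rng):
    """Draw the next state using only the current state's transition row."""
    row = TRANSITIONS[state]
    return rng.choices(list(row), weights=list(row.values()))[0]


def simulate(start, n_steps, seed=None):
    """Return the visited states, beginning with `start` (n_steps + 1 entries)."""
    rng = random.Random(seed)
    path = [start]
    for _ in range(n_steps):
        path.append(step(path[-1], rng))
    return path


print(simulate("sunny", 10, seed=42))
```

Passing a `seed` makes a run reproducible; leaving it out gives a different random path each time.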

A stochastic process has the Markov property if the conditional probability distribution of future states of the process depends only upon the present state, not on the sequence of events that preceded it. (Given two jointly distributed random variables X and Y, the conditional probability distribution of Y given X is the probability distribution of Y when X is known to be a particular value.) The term ‘Markov assumption’ is used to describe a model where the Markov property is assumed to hold, such as a hidden Markov model (in which the system being modeled is assumed to be a Markov process with unobserved [hidden] states).
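The conditional distribution described in the parenthetical, and its role in the Markov assumption, can be sketched in a few lines of Python. This is an illustrative sketch, not a standard library API: `conditional` computes the distribution of Y given X = x from a joint probability table, and `estimate_transitions` (a hypothetical helper) applies it to consecutive pairs of an observed sequence. Under the Markov assumption, conditioning on the current state alone is enough to describe where the process goes next.

```python
from collections import Counter


def conditional(joint, x):
    """Return {y: P(Y = y | X = x)} from a joint table {(x, y): P(X = x, Y = y)}."""
    p_x = sum(p for (xi, _), p in joint.items() if xi == x)
    if p_x == 0:
        raise ValueError(f"P(X = {x!r}) is zero; the conditional is undefined")
    return {y: p / p_x for (xi, y), p in joint.items() if xi == x}


def estimate_transitions(sequence):
    """Estimate P(next state | current state) from an observed sequence.

    Only (current, next) pairs are counted: under the Markov assumption,
    earlier history carries no extra information about the next state.
    """
    pair_counts = Counter(zip(sequence, sequence[1:]))
    total = sum(pair_counts.values())
    joint = {pair: n / total for pair, n in pair_counts.items()}
    states = {current for current, _ in joint}
    return {s: conditional(joint, s) for s in states}
```

For example, `estimate_transitions("AABAB")` sees the pairs AA, AB, BA, AB, so from state A it estimates roughly 1/3 for staying at A and 2/3 for moving to B, while from B it always moves to A.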



Stochastic Process

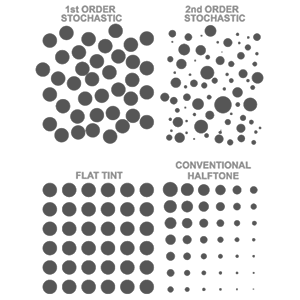

In the mathematics of probability, a stochastic [stuh-kas-tik] process is a random function: a collection of random variables indexed by time or space. Phenomena commonly modeled as stochastic processes include stock market and exchange rate fluctuations; signals such as speech, audio, and video; medical data such as a patient’s EKG, EEG, blood pressure, or temperature; and random movement such as Brownian motion (the random motion of particles suspended in a fluid) or random walks (paths made up of a succession of random steps).
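A simple symmetric random walk on the integers is one of the easiest stochastic processes to write down, and it is also a Markov chain, since each new position depends only on the current one. The sketch below assumes steps of +1 or -1 with equal probability and a start at 0; `random_walk` is a hypothetical helper name.

```python
import random


def random_walk(n_steps, seed=None):
    """Simple symmetric random walk on the integers, starting at 0.

    Each step is +1 or -1 with equal probability, independent of earlier steps.
    Returns the list of positions, including the starting point.
    """
    rng = random.Random(seed)
    position = 0
    path = [position]
    for _ in range(n_steps):
        position += rng.choice((-1, 1))
        path.append(position)
    return path


print(random_walk(20, seed=3))
```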

When the index is spatial rather than temporal, the process is often called a random field; examples include static images, random topographies (landscapes), and composition variations of an inhomogeneous material. A stochastic process is the probabilistic counterpart of a deterministic system (one in which no randomness is involved in the development of future states). Instead of describing a process that can evolve in only one way, a stochastic or random process has some indeterminacy: even if the initial condition (or starting point) is known, there are several (often infinitely many) directions in which the process may evolve.
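The contrast between a deterministic system and a stochastic process can be illustrated with a toy update rule. In this sketch the rule x_{n+1} = 0.5 x_n + 1 and the Gaussian noise term are arbitrary assumptions chosen for illustration. Started from the same initial condition, the deterministic version always produces the same path, while the noisy version can produce a different path on every run.

```python
import random


def deterministic_path(x0, n_steps):
    """Deterministic rule x_{n+1} = 0.5 * x_n + 1: one start, one future."""
    path = [x0]
    for _ in range(n_steps):
        path.append(0.5 * path[-1] + 1)
    return path


def stochastic_path(x0, n_steps, rng):
    """The same rule plus standard Gaussian noise: one start, many possible futures."""
    path = [x0]
    for _ in range(n_steps):
        path.append(0.5 * path[-1] + 1 + rng.gauss(0.0, 1.0))
    return path


rng = random.Random(7)
print(deterministic_path(0.0, 5))
print(stochastic_path(0.0, 5, rng))  # first run from x0 = 0.0
print(stochastic_path(0.0, 5, rng))  # second run from the same x0
```

Reusing one `rng` for both stochastic runs means they draw different noise, which is what exposes the indeterminacy; seeding a fresh generator identically would instead reproduce the same path.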