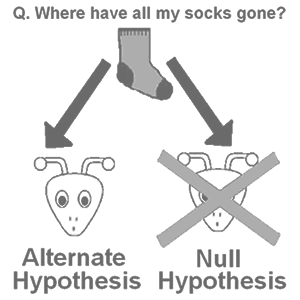

In statistics, a null hypothesis is the ‘no-change’ or ‘no-difference’ hypothesis. The term was first used by English statistician and geneticist Ronald Fisher in his book ‘The Design of Experiments.’ A hypothesis is a proposed explanation for some event or problem. Every experiment that tests for an effect has a null hypothesis. If you do an experiment to see if a medicine works, the null hypothesis is that it has no effect.

If you do an experiment to see if people like chocolate or vanilla ice cream better, the null hypothesis is that people like them equally. If you do an experiment to see whether boys or girls play the piano better, the null hypothesis is that boys and girls are equally good at playing the piano. The opposite of the null hypothesis is the alternative hypothesis, which says that a difference does exist: this medicine makes people healthier, people like chocolate ice cream better than vanilla, or boys and girls are not equally good at playing the piano.
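As a rough illustration of how the ice cream example could be tested, the sketch below computes an exact two-sided binomial p-value using only the Python standard library. The survey numbers (60 of 100 people preferring chocolate), the 0.05 threshold, and the function name `binomial_two_sided_p` are all assumptions made up for this example, not part of the original text.

```python
from math import comb


def binomial_two_sided_p(successes: int, n: int, p_null: float = 0.5) -> float:
    """Exact two-sided binomial p-value.

    Adds up, under the null hypothesis, the probability of every outcome
    that is no more likely than the one actually observed.
    """
    pmf = [comb(n, k) * p_null**k * (1 - p_null) ** (n - k) for k in range(n + 1)]
    cutoff = pmf[successes] * (1 + 1e-9)  # small tolerance for floating-point ties
    return min(1.0, sum(prob for prob in pmf if prob <= cutoff))


# Hypothetical survey: 60 of 100 people say they prefer chocolate.
# Null hypothesis: people like both flavours equally (p = 0.5).
p_value = binomial_two_sided_p(60, 100)
alpha = 0.05  # assumed significance threshold

print(f"p-value = {p_value:.4f}")
if p_value < alpha:
    print("Reject the null hypothesis: the data suggest a real preference.")
else:
    print("Fail to reject the null hypothesis: no clear preference shown.")
```

A small p-value means the observed split would be unusual if people really liked both flavours equally. A large one means the data are compatible with the null hypothesis, which is not the same as proving it true.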


Null Hypothesis

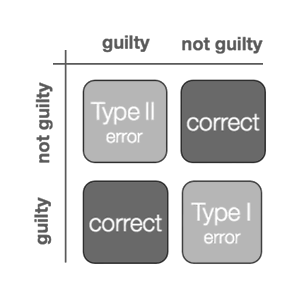

Type I and Type II Errors

In statistics, Type I and Type II errors are wrong conclusions that statistical inference can reach when chance variation in the data points the wrong way. A Type I error is rejecting the null hypothesis when it is actually true (e.g. a jury finding an innocent person guilty, a ‘false positive’); a Type II error is failing to reject the null hypothesis when it is actually false (e.g. a jury finding a guilty person not guilty, a ‘false negative’ or simply a ‘miss’).

Usually a Type I error leads one to conclude that a thing or relationship exists when really it doesn’t: for example, that a patient has a disease being tested for when really the patient does not have the disease, or that a medical treatment cures a disease when really it doesn’t. Examples of Type II errors would be a blood test failing to detect the disease it was designed to detect, in a patient who really has the disease; or a clinical trial of a medical treatment failing to show that the treatment works when really it does.
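The two error types can also be seen in a small simulation. The sketch below repeats a made-up preference survey many times and counts how often an exact binomial test reaches the wrong conclusion. The sample size, the true preference rate of 0.6, the 0.05 threshold, the seed, and the helper names `two_sided_p` and `rejection_rate` are all assumptions chosen for illustration.

```python
import random
from math import comb


def two_sided_p(successes, n, p_null=0.5):
    """Exact two-sided binomial p-value under the null hypothesis."""
    pmf = [comb(n, k) * p_null**k * (1 - p_null) ** (n - k) for k in range(n + 1)]
    cutoff = pmf[successes] * (1 + 1e-9)  # small tolerance for floating-point ties
    return min(1.0, sum(prob for prob in pmf if prob <= cutoff))


def rejection_rate(true_p, n=100, alpha=0.05, trials=2000, seed=0):
    """Fraction of simulated surveys in which the null (p = 0.5) is rejected."""
    rng = random.Random(seed)
    # Work out once which outcomes lead to rejecting the null hypothesis.
    rejects = {k for k in range(n + 1) if two_sided_p(k, n) < alpha}
    hits = 0
    for _ in range(trials):
        successes = sum(rng.random() < true_p for _ in range(n))
        hits += successes in rejects
    return hits / trials


# Null hypothesis is true (no preference), but the test rejects it anyway.
type_i_rate = rejection_rate(true_p=0.5)

# Null hypothesis is false (assumed 60% prefer chocolate), but the test misses it.
type_ii_rate = 1 - rejection_rate(true_p=0.6)

print(f"Type I error rate  (false positives): {type_i_rate:.3f}")
print(f"Type II error rate (misses):          {type_ii_rate:.3f}")
```

In this setup the Type I rate should stay near or below the chosen threshold, while the Type II rate depends on how large the true difference is and how many people are surveyed. A bigger sample or a bigger true difference makes misses less common.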
