In statistics, a null hypothesis is the ‘no-change’ or ‘no-difference’ hypothesis. The term was first used by English geneticist Ronald Fisher in his book ‘The Design of Experiments.’ A hypothesis is a proposed explanation for some event or problem. Every experiment has a null hypothesis. If you do an experiment to see if a medicine works, the null hypothesis is that it doesn’t work.

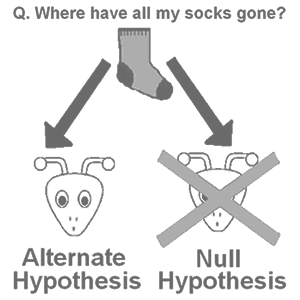

If you do an experiment to see if people like chocolate or vanilla ice-cream better, the null hypothesis is that people like them equally. If you do an experiment to see whether boys or girls play the piano better, the null hypothesis is that boys and girls are equally good at playing the piano. The opposite of the null hypothesis is the alternative hypothesis (a difference does exist: this medicine makes people healthier, people like chocolate ice-cream better than vanilla, or boys are better at playing the piano than girls).

The practice of science involves formulating and testing hypotheses, statements that are capable of being proven false using a test of observed data. The null hypothesis typically corresponds to a general or default position (e.g. there is no relationship between two measured phenomena or that a potential treatment has no effect). Its opposite, the alternative hypothesis, asserts a particular relationship does exist between the phenomena. The alternative need not be the logical negation of the null hypothesis; it predicts the results from the experiment if the alternative hypothesis is true. The null hypothesis can never be proven. Data, such as the results of an observation or experiment, can only reject or fail to reject a null hypothesis. For example, if comparison of two groups (such as subjects treated with a medication and untreated subjects) reveals no statistically significant difference between the two, it does not prove that there really is no difference; it only shows that the results were not sufficient to reject the null hypothesis.

Hypothesis testing works by collecting data and measuring how likely the particular set of data is, assuming the null hypothesis is true. If the data set is very unlikely, defined as being part of a class of sets of data that only rarely will be observed, the experimenter rejects the null hypothesis, concluding it (probably) is false. If the data do not contradict the null hypothesis, then only a weak conclusion can be made; namely that the observed data set provides no strong evidence against the null hypothesis. Because the null hypothesis could still be either true or false in this case, the interpretation depends on context: in some contexts it means that the data give insufficient evidence to make any conclusion; in others it means that there is no evidence to support changing from a currently useful regime to a different one. For instance, a certain drug may reduce the chance of having a heart attack. Possible null hypotheses are ‘this drug does not reduce the chances of having a heart attack’ or ‘this drug has no effect on the chances of having a heart attack.’ The test of the hypothesis consists of administering the drug to half of the people in a study group as a controlled experiment. If the data show a statistically significant change in the people receiving the drug, the null hypothesis is rejected.
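The drug example can be sketched in code. The text does not name a specific test, so this sketch assumes one common choice, Fisher's exact test, computed directly from the hypergeometric distribution. The function names and the patient counts are hypothetical, chosen only for illustration.

```python
from math import comb

def hypergeom_pmf(k, total_events, n_treated, n_total):
    """Probability that exactly k of the events fall in the treated group
    if group membership has no effect (the null hypothesis)."""
    return (comb(total_events, k)
            * comb(n_total - total_events, n_treated - k)
            / comb(n_total, n_treated))

def one_sided_p_value(events_treated, n_treated, events_control, n_control):
    """Probability, under the null, of seeing this few events or fewer
    in the treated group (one-sided Fisher's exact test)."""
    total_events = events_treated + events_control
    n_total = n_treated + n_control
    lowest = max(0, total_events - n_control)  # smallest possible treated count
    return sum(hypergeom_pmf(k, total_events, n_treated, n_total)
               for k in range(lowest, events_treated + 1))

# Hypothetical study: 100 people per group, 5 heart attacks among the
# treated and 15 among the untreated. These numbers are made up.
ALPHA = 0.05  # assumed significance level
p = one_sided_p_value(5, 100, 15, 100)
print(f"one-sided p-value: {p:.4f}")
print("reject null" if p <= ALPHA else "fail to reject null")
```

A small p-value here says only that the observed split would be rare if the drug had no effect; a large one does not show that the drug is ineffective.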

Consider the question of whether a tossed coin is fair (i.e. that on average it lands heads up 50% of the time). A potential null hypothesis is ‘this coin is not biased towards heads.’ The experiment is to repeatedly toss the coin. A possible result of 5 tosses is 5 heads. Under this null hypothesis, the data are considered unlikely (with a fair coin, the probability of this is 1/32, about 3%, and the result would be even more unlikely if the coin were biased in favor of tails). At the conventional 5% significance level, the data refute the null hypothesis (that the coin is either fair or biased towards tails) and the conclusion is that the coin is biased towards heads. Alternatively, the null hypothesis ‘this coin is fair’ could be examined by looking out for either too many tails or too many heads, and thus the types of outcomes that would tend to contradict this null hypothesis are those where a large number of heads or a large number of tails are observed. Thus a possible diagnostic outcome would be that all tosses yield the same outcome, and the probability of 5 of a kind is 2/32, about 6%, under the null hypothesis. This is not statistically significant at the 5% level, preserving the null hypothesis in this case.
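The two probabilities in the coin example can be checked with a few lines of code. This is a minimal sketch: the function names are illustrative, and the 5% threshold is an assumed convention rather than something fixed by the example.

```python
from math import comb

def binom_pmf(k, n, p=0.5):
    """Probability of exactly k heads in n tosses when P(heads) = p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def p_value_one_sided(heads, n, p=0.5):
    """P(at least `heads` heads): tests 'not biased towards heads'."""
    return sum(binom_pmf(k, n, p) for k in range(heads, n + 1))

def p_value_two_sided(heads, n):
    """P(a result at least as far from n/2 as observed): tests 'coin is fair'."""
    distance = abs(heads - n / 2)
    return sum(binom_pmf(k, n) for k in range(n + 1)
               if abs(k - n / 2) >= distance)

ALPHA = 0.05  # assumed significance level

one_sided = p_value_one_sided(5, 5)  # 1/32
two_sided = p_value_two_sided(5, 5)  # 2/32
print(f"one-sided: {one_sided:.5f}, reject: {one_sided <= ALPHA}")
print(f"two-sided: {two_sided:.5f}, reject: {two_sided <= ALPHA}")
```

The same five heads lead to rejection under the one-sided null and to no rejection under the two-sided null, which is exactly the sensitivity to formulation that the example describes.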

This example illustrates that the conclusion reached from a statistical test may depend on the precise formulation of the null and alternative hypotheses. The example data set demonstrates the point, but is actually too small to support either conclusion. Generally, fewer than 30 trials put any ‘yes-or-no’ conclusion at risk. The example also illustrates one hazard of hypothesis testing, that of multiple testing (when one considers a set of statistical inferences simultaneously, or infers on selected parameters only, where the selection depends on the observed values). In practice, using a single dataset to evaluate or test a large number of different null hypotheses that are in fact true will lead to erroneous conclusions unless appropriate corrections are made to the testing procedure. Increasing the number of different tests made on a single dataset, without accounting for the number of tests being conducted, increases the number of tests that would wrongly suggest that the corresponding null hypothesis should be rejected. This hazard becomes important in the context of data mining. This topic is often discussed under the heading of ‘testing hypotheses suggested by the data.’
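The size of the multiple-testing hazard can be illustrated numerically. The sketch below assumes that the tests are independent and that every null hypothesis is true; the text does not name a particular correction, so the Bonferroni adjustment shown here is just one common choice, and the function names are illustrative.

```python
def family_wise_error_rate(num_tests, alpha=0.05):
    """Chance of at least one false rejection across `num_tests`
    independent tests when every null hypothesis is actually true."""
    return 1 - (1 - alpha) ** num_tests

def bonferroni_threshold(num_tests, alpha=0.05):
    """Per-test significance level that keeps the overall rate at or
    below `alpha` (Bonferroni correction, an assumed choice)."""
    return alpha / num_tests

for m in (1, 5, 20, 100):
    uncorrected = family_wise_error_rate(m)
    per_test = bonferroni_threshold(m)
    corrected = family_wise_error_rate(m, alpha=per_test)
    print(f"{m:>3} tests: uncorrected {uncorrected:.3f}, "
          f"per-test level {per_test:.5f}, corrected {corrected:.3f}")
```

With 20 independent tests at the 5% level, the chance of at least one spurious rejection is about 64%, even though each individual test looks well controlled.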

Statistical hypothesis testing involves performing the same experiment on multiple subjects. The number of subjects is known as the sample size. The properties of the procedure depend on the sample size. Even if a null hypothesis does not hold for the population, an insufficient sample size may prevent its rejection. If sample size is under a researcher’s control, a good choice depends on the statistical power of the test, the effect size that the test must reveal and the desired significance level. The significance level is the probability of rejecting the null hypothesis when the null hypothesis holds in the population. The statistical power is the probability of rejecting the null hypothesis when it does not hold in the population (i.e., for a particular effect size).
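The link between sample size, significance level and power can be made concrete with the coin example. The following sketch computes the exact power of a one-sided test of ‘the coin is not biased towards heads’; the function names, the 5% level and the assumed true heads probability of 0.7 (the effect size) are illustrative choices, not values from the text.

```python
from math import comb

def upper_tail(c, n, p):
    """P(at least c heads in n tosses) when P(heads) = p."""
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(c, n + 1))

def critical_value(n, alpha=0.05):
    """Smallest head count c whose upper-tail probability under a fair
    coin is at most alpha. Returns n + 1 if no outcome is rare enough,
    meaning the test can never reject at this sample size."""
    for c in range(n + 2):
        if upper_tail(c, n, 0.5) <= alpha:
            return c

def power(n, p_true, alpha=0.05):
    """Probability of rejecting the null when P(heads) is really p_true."""
    return upper_tail(critical_value(n, alpha), n, p_true)

# Assumed effect size: the coin really lands heads 70% of the time.
for n in (4, 5, 20, 50, 100):
    print(f"n = {n:>3}: reject at {critical_value(n)} or more heads, "
          f"power = {power(n, 0.7):.3f}")
```

With only 4 tosses no outcome is rare enough under a fair coin to reach the 5% level, so the power is zero whatever the true bias; larger samples make a real bias of this size increasingly likely to be detected.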

The Daily Omnivore

Everything is Interesting
